Recently a client asked me how I can prove that application testing is worth the money. For me, it’s obvious that testing is a vital part of the development process. Without it, there is little chance of delivering a successful, high-quality product. So how do I prove it to someone who isn’t familiar with the reality of the business?

In almost any aspect of life, higher quality comes at a higher price. Do you want a great, luxurious car? You need to pay more. Do you want a tailor-made suit? You’d better dig deep into your pocket. Software development is no different. If you want a fast, reliable and secure application, you need to invest in testing. But is it really worth the expense in the long run? First, we need to understand what an application development cycle looks like and how it affects the cost of fixing a bug.

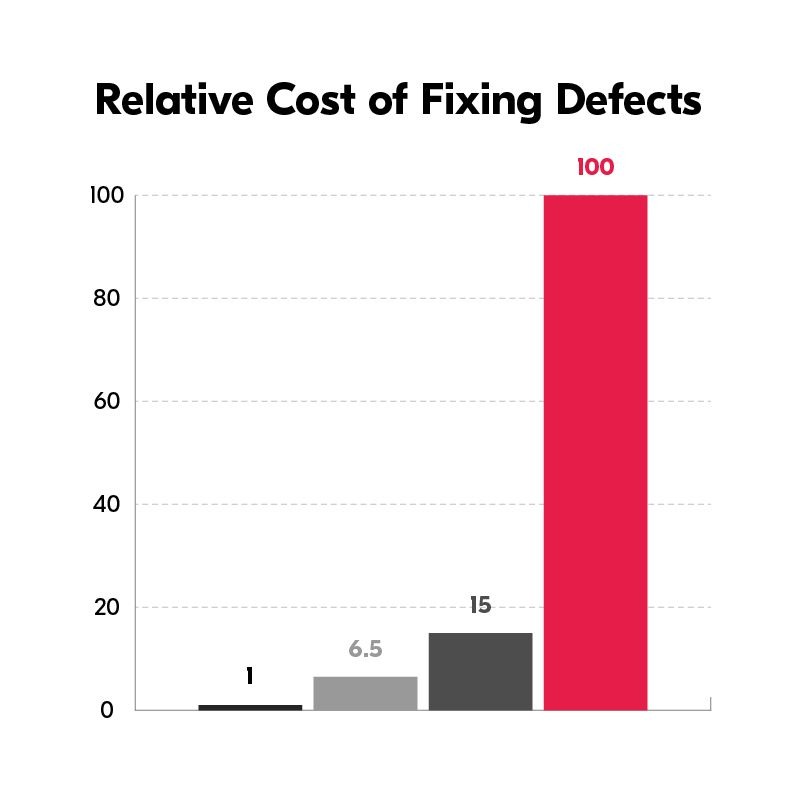

The software development cycle usually starts with the requirements and design phases. This is where you outline the scope of the application, its main functionalities, visuals, architecture, test scope and any other requirements that you may have. This is a very important phase because any issue at this point may result in complications later on. If a developer overlooks a requirement, it may be considerably more expensive to fix later. If you decide to change a requirement at a later stage, other parts of the application may be affected in ways you could not have anticipated. Fixing an issue at this stage costs almost nothing: all you need to do is rethink and rewrite the requirement or add some new ones.

The next phase is the actual coding. Web application development teams usually consist of several people, and the code they produce is measured in thousands of lines. Each line of code is a potential place for a defect. The more complex the software is, the more interdependencies there are between modules, integrations and architecture pieces. Developers are also human, and in this jungle of advanced logic, code standards, abstract end-user ideas and external system integrations it is very easy to overlook something. They also have to consider the fact that different browsers and devices often behave differently. There are also changing requirements and bug fixes that require adjustments to code already written. It often happens that a change in one part of the code affects other, seemingly unrelated functionalities. Finally, there is business pressure to meet the agreed deadlines, which can sometimes make developers work in a hurry. And this is just the tip of the iceberg. An issue found at the development stage is usually fairly easy to fix, as the developer is familiar with the code they have just written and it doesn’t take much time to isolate the issue. Still, it’s much more costly than a simple change in the requirements phase.

In agile development methodologies, testing happens alongside the development process. You may ask yourself: if testing takes place, there should be no issues on production, right? Well, not exactly. First of all, it is not feasible to test all the possible inputs and outputs of a complex application. Moreover, it is not possible to test the application on all browser and device combinations in all possible versions. Finally, users are creative and hard to predict: it is not possible to come up with everything a user may think of doing and guard against all of it.

There is also the matter of maintaining the application. At times, a new version of software that the application depends on may clash with it and cause bugs. From time to time, external integrations are updated while the application code is not, which may cause the app to malfunction. Although it may sound strange, sometimes we also choose not to fix some of the bugs. If we know that a defect causes only minor discomfort for a limited number of users, it is sometimes not worth fixing because the cost of the fix would exceed the actual damage caused by the issue.

Although a well-chosen test strategy can minimize project risks and eliminate critical issues, it still cannot guarantee an issue-free project. So what is the cost of fixing a bug at the testing stage? It’s even higher than in the development stage. Usually a tester, a developer and, sometimes, a PM decide on the priority of the bug. It is also much more difficult for a developer to diagnose the root cause of the issue. Is it the architecture? Does it occur only for a certain set of data? Is it a bug within the code? Is it possible to reproduce it on my machine, or is it related only to the test environment? A developer has to answer these questions, and that takes more time than investigating code and functionality they have just written.

The last phase of the development cycle is when the application is already deployed to production. The cost of fixing bugs on production is significantly higher, and sometimes it can be enormous. Even setting aside the hard-to-measure costs, such as damage to the company’s reputation, users leaving the application or customers being unable to complete their purchases, the fix itself costs more. The issues that users find are usually much more difficult to reproduce in the development environment. They’re often connected to a specific production architecture or database state. On top of everything, users usually don’t provide as many details as a qualified software tester, which prolongs the investigation. The table below presents some real-life examples revealing how much an issue can affect a company’s revenue.

So is it even possible for software to be bug-free? Well, let me answer that question with another question. Do big companies with huge resources, like Apple, Google or Facebook, provide bug-free software?

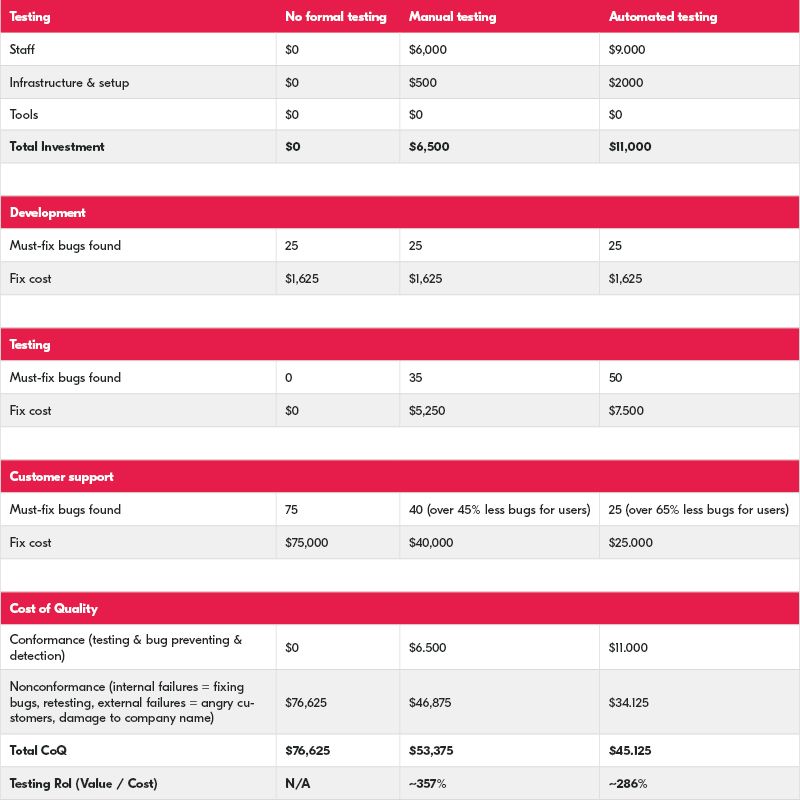

Knowing all that, and based on the explanations above and IBM’s estimated bug-fix costs, we can perform an example Return on Investment (ROI) analysis for testing.

Let’s try to analyze an ROI example for a 3-month project. First, let’s make the following assumptions:

1. Assuming that the bug-fix cost during the design phase is $10 and applying the IBM estimates above, we get:

- Design bug-fix cost – $10

- Development bug-fix cost – $65

- Testing bug-fix cost – $150

- Production bug-fix cost – $1000

2. There are 100 must-fix bugs during a 3-month period and they all cost approximately the same.

3. None of the issues are found during the design phase (finding them there could have lowered the total cost even more).

Now we have to estimate the cost of manual testing and of test automation, as well as the number of bugs found in each phase. Assuming that basic manual application testing costs around $2,000 per month (that’s the salary of a specialist) and adding a minor infrastructure fee for the test environment, we get a total investment of $6,500 for the 3-month project. When it comes to test automation, let’s increase the specialist’s salary as well as the infrastructure and setup cost; then we get $11,000.

Let’s estimate that out of the 100 total bugs, 25 will be found by the developers (this number stays constant whether we carry out tests or not). We can estimate that during the testing phase the testers will find 35 issues with manual tests alone and 50 when we add automation on top of that.

The rest of the bugs will be found by the end users. Now we can calculate the total cost of quality as the sum of the cost of conformance (the cost of ensuring that the app conforms to quality requirements) and the cost of non-conformance (money that has to be spent because the app does not conform to the quality requirements). Finally, we can calculate the test ROI as the value brought by testing divided by the cost of the testing itself.

This example certainly contains numerous assumptions, but you can apply the same approach to an actual project. It shows how we can estimate the ROI of testing. I believe that it provides some insight into whether application testing is worth the money. Even though hiring a testing team is, in fact, an expense, when you look at your entire application development cycle you will view it as an asset. Testing will spare you a lot of future quality expenses. It also helps you avoid things like user frustration, clients leaving and even damage to the company’s reputation, factors which are difficult to measure in terms of money.

See also:

- Good reasons not to skimp testing

- Client’s engagement in an application development project? What involvement is needed for successful delivery?

- Web Application Testing: Terms of Quality in Web Applications

- Great application development starts with a product design workshop
